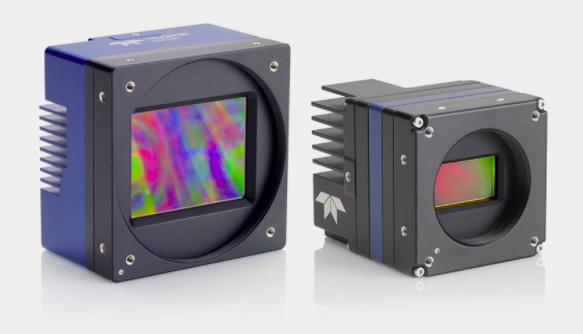

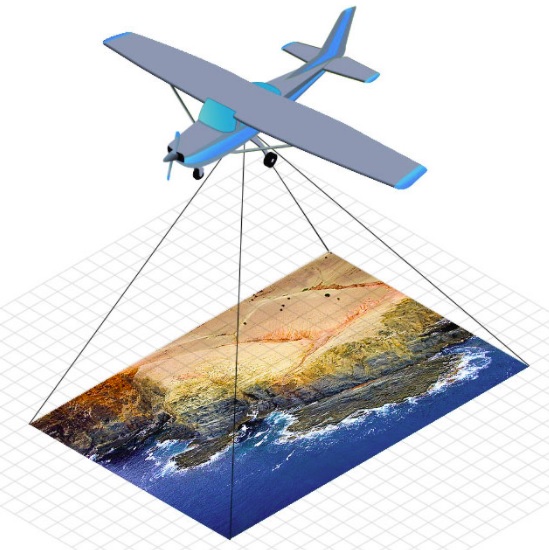

AT Sensors, previously known as Automation Technology, is a leading manufacturer of 3D laser profilers and infrared smart cameras. As is customary among leading camera suppliers, AT Sensors provides a comprehensive software development kit (SDK) that makes it easy for customers to deploy their cameras. The Solution Package is available for both Windows and Linux. Read on to find out what’s included!

Let’s unpack each of the capabilities highlighted in the above graphic. You can get the overview from the video, from our written highlights, or both.

Video overview

Overview

AT Sensors’ Solution Package is designed to make it easy to configure the camera(s), prototype initial setups and trial runs, proceed with a comprehensive integration, and achieve a sustainable solution.

cxExplorer

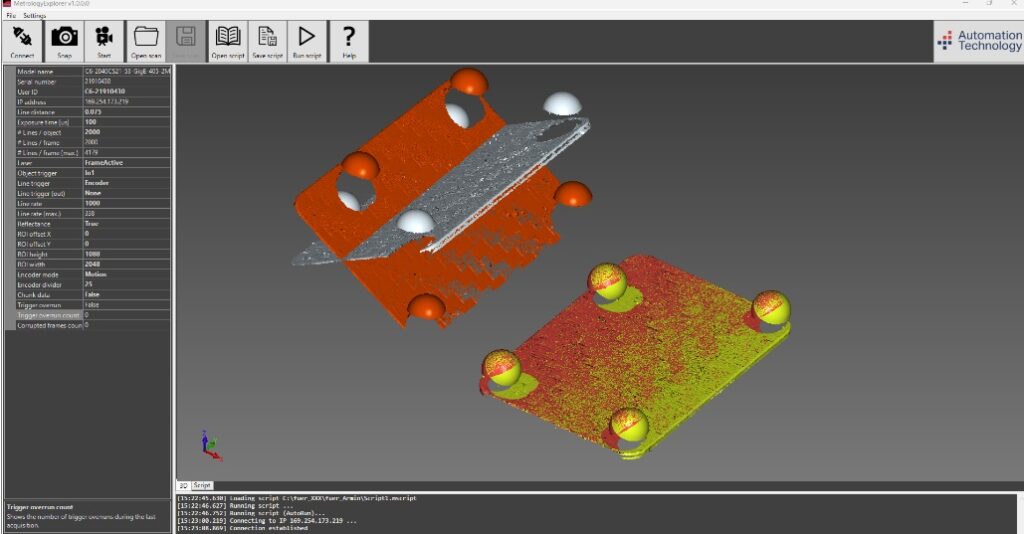

The cxExplorer, a graphical user interface provided by AT Sensors, makes it easy to configure a compact sensor. With cxExplorer, a sensor can be adjusted to the required settings using easy-to-navigate menus, stepwise “wizards”, image previews, and more.

APIs, Apps, and Tools

The cxSDK offers programming interfaces for C, C++, and Python. The same package works with all of AT Sensors’ 3D and infrared cameras.

Product documentation

Of course there’s documentation. Everybody provides documentation. But not all documentation is both comprehensive and user-friendly. This is. It’s illustrated with screenshots, examples, and tutorials.

Metrology Package

Winner of a 2023 “inspect” award, the optional add-on Metrology Package can commission a customer’s new sensor in just 10 minutes, with no programming required. From there, the user can go on to create an initial 3D point cloud, also with little effort.

For more information about AT Sensors’ 3D laser profilers, infrared smart cameras, or the Solution Package SDK, call us at 978-474-0044. Tell us a little about your application, and we can guide you to the optimal products for your particular needs.

1st Vision’s sales engineers have over 100 years of combined experience to assist with your camera and component selection. With a large portfolio of lenses, cables, network interface cards (NICs), and industrial computers, we can provide a full vision solution!