High dynamic range (HDR) isn’t new. It’s frequently mentioned, and it’s offered on-camera, via software, or via FPGA. Is it just a marketing term, or a real benefit? If your application’s scenes contain both bright and dark regions, HDR can absolutely deliver benefits.

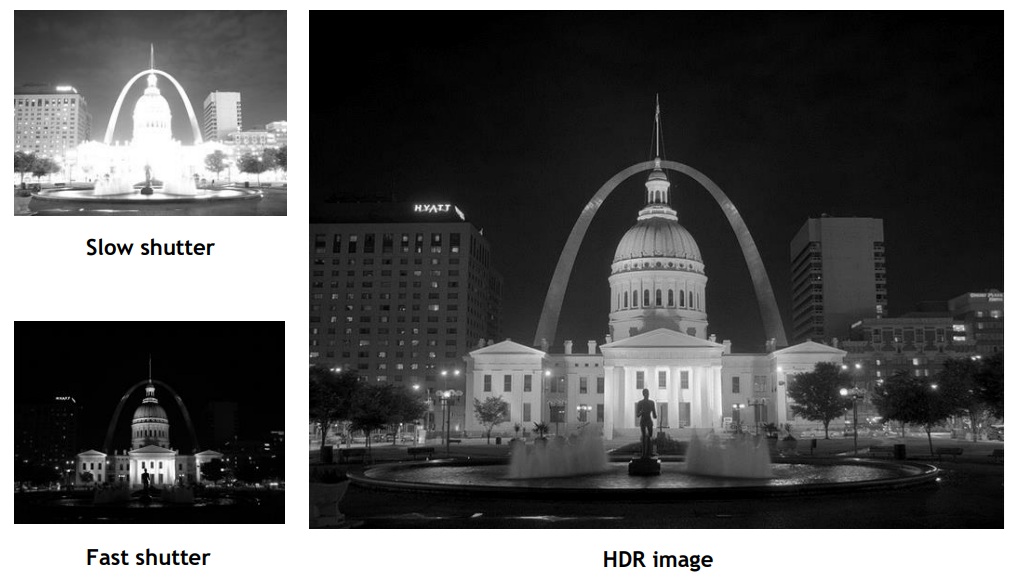

Consider the three images shown below:

Neither the “slow shutter” image nor the “fast shutter” image is optimal. The former is over-saturated – one can’t even find the many windows in the central building. The fast shutter image is of course too dark, essentially losing the arch and the flagpole. While this scene is more from the realm of “photography” than “machine vision”, the concepts are the same.

Clearly the best image is the HDR image: the lighter areas are revealed in nuanced detail, and the unlit trees and gray windows are just as clear in their own degrees of black and gray, along with everything in between. How is this achieved?

What is HDR?

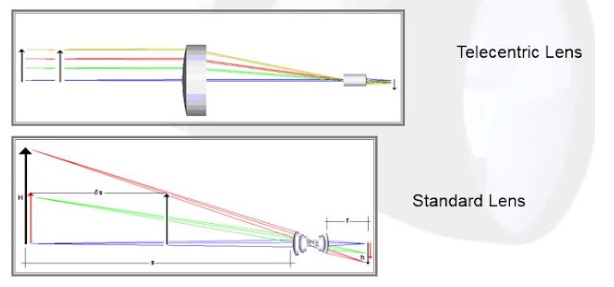

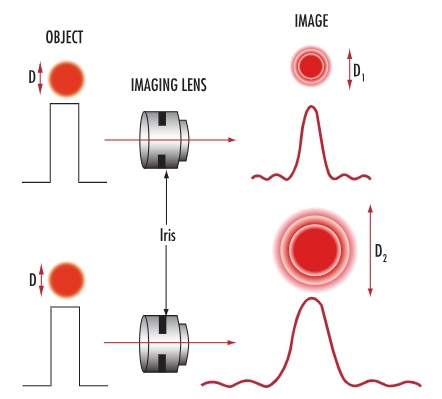

Let’s unpack the acronym, starting with DR for dynamic range. DR is the ratio between the largest and smallest measurable values, for the quantity being measured. For machine vision, it’s light intensity that’s being quantified.
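To make the ratio concrete, here is a minimal sketch that expresses dynamic range as a plain ratio, in decibels, and in photographic stops. The function name `dynamic_range` and the example sensor figures are assumptions for illustration only, and the 20·log10 convention is the one commonly used for image sensors rather than something specified above.

```python
import math

def dynamic_range(max_signal: float, min_signal: float) -> dict:
    """Express dynamic range as a ratio, in decibels, and in stops."""
    if max_signal <= 0 or min_signal <= 0:
        raise ValueError("signals must be positive")
    ratio = max_signal / min_signal
    return {
        "ratio": ratio,
        "dB": 20.0 * math.log10(ratio),   # common sensor convention (assumed)
        "stops": math.log2(ratio),        # each stop is a doubling of light
    }

# Hypothetical sensor: largest measurable signal 10,000 e-,
# smallest measurable signal (noise floor) 2.5 e-.
dr = dynamic_range(10_000, 2.5)
print(f"{dr['ratio']:.0f}:1, {dr['dB']:.1f} dB, {dr['stops']:.1f} stops")
```

With these made-up figures the ratio is 4000:1, which works out to roughly 72 dB, or just under 12 stops.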

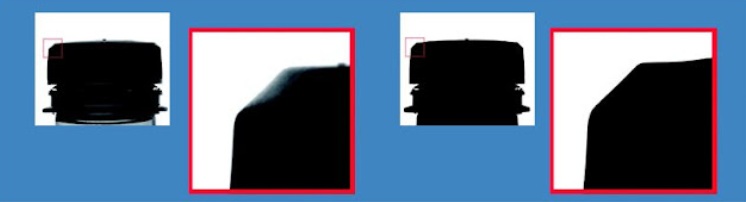

Generally speaking, a larger dynamic range is preferable to a smaller one, as the nuanced differences of a relatively larger dynamic range may be required for effective image processing. Take edge-detection, a common machine vision requirement for many applications. The edge may only become apparent, under given lighting conditions and resolution, when the saturation of pixels in a given region is consistently lower to one side and consistently higher to the other side of the “emergent” edge. With sufficient dynamic range, calculated confidence grows, while poor dynamic range may fail to reveal an edge at all.
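The edge-detection point can be illustrated with a toy example. The sketch below uses the number of gray levels (bit depth) as a rough stand-in for how many distinct intensity levels the imaging chain can resolve; that simplification, the helper names `quantize` and `largest_step`, and the scan-line values are all assumptions made for illustration.

```python
def quantize(profile, bits):
    """Map intensities in [0, 1] to integer gray levels at the given bit depth."""
    top = (1 << bits) - 1
    return [min(top, int(v * top + 0.5)) for v in profile]

def largest_step(levels):
    """Largest absolute difference between neighboring pixels."""
    return max(abs(b - a) for a, b in zip(levels, levels[1:]))

# Hypothetical 1-D scan line across a faint edge in a dark region
# (values are fractions of full scale, chosen only for illustration).
scan_line = [0.010] * 4 + [0.018] * 4

coarse = quantize(scan_line, bits=6)    # few distinguishable levels
fine = quantize(scan_line, bits=12)     # many distinguishable levels

print("6-bit :", coarse, "largest step =", largest_step(coarse))
print("12-bit:", fine, "largest step =", largest_step(fine))
```

At 6 bits both sides of the edge collapse to the same gray level, so no step exists for an edge detector to find. At 12 bits the same scene yields a step of 33 gray levels.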

Ways to create a composite HDR image

One way to create an HDR image is with two exposures and an algorithm for creating the composite. The shorter exposure captures the more brightly lit or highly reflective surfaces, while the remaining regions stay dark or register only slightly. A longer exposure oversaturates the lighter targets, but reveals nuanced variation in the details that the shorter exposure leaves hidden.

In practice one takes the longer exposure first, so that the darker portions of the scene produce a spread of non-zero values, i.e. a dynamic range across the darker regions.

Then for the shorter exposure, use the brightest non-saturated pixels from the first exposure as a reference to generate small non-zero values as a control on the short exposure, creating a calculated point of overlap. That way many pixels that were oversaturated on the long exposure are only slightly to moderately saturated on the short exposure, for a nuanced spread of values across the corresponding pixels.

The blending algorithm compares the two images, pixel for pixel, with the overlap point as a reference. Saturated pixels in the first image are replaced with the corresponding non-saturated pixel values from the second image.
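The pixel-for-pixel replacement described above can be sketched in a few lines. This is only an illustration under stated assumptions: the function `blend_hdr`, the 8-bit saturation threshold of 255, and the use of a known exposure-time ratio to bring the short exposure onto the long exposure’s scale (standing in for the overlap reference) are not taken from any particular camera or library.

```python
def blend_hdr(long_img, short_img, exposure_ratio, saturation=255):
    """Merge two exposures of the same scene, pixel for pixel.

    long_img, short_img: equally sized 2-D lists of raw pixel values.
    exposure_ratio: long exposure time / short exposure time (assumed known).
    saturation: value at which a long-exposure pixel counts as clipped.
    """
    merged = []
    for long_row, short_row in zip(long_img, short_img):
        row = []
        for long_px, short_px in zip(long_row, short_row):
            if long_px >= saturation:
                # Clipped in the long exposure: take the short-exposure
                # value, scaled onto the long exposure's intensity scale.
                row.append(short_px * exposure_ratio)
            else:
                # Still within range: keep the long-exposure detail.
                row.append(long_px)
        merged.append(row)
    return merged

# Made-up 2x3 example; the long exposure is 8x the short one.
long_exposure = [[12, 80, 255], [200, 255, 255]]
short_exposure = [[1, 10, 40], [25, 90, 120]]

merged = blend_hdr(long_exposure, short_exposure, exposure_ratio=8)
print(merged)
```

The three clipped pixels come back as 320, 720 and 960, so the merged image distinguishes bright regions that the long exposure alone reported as an identical 255.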

While the two-exposure approach described above is easy to understand, there’s clearly a time-cost in taking two successive exposures, reading them both out to the PC host, and doing the image processing. For certain applications, that may be acceptable. For others, especially with motion involved, or desired high cycle counts, one might hope for a faster approach.

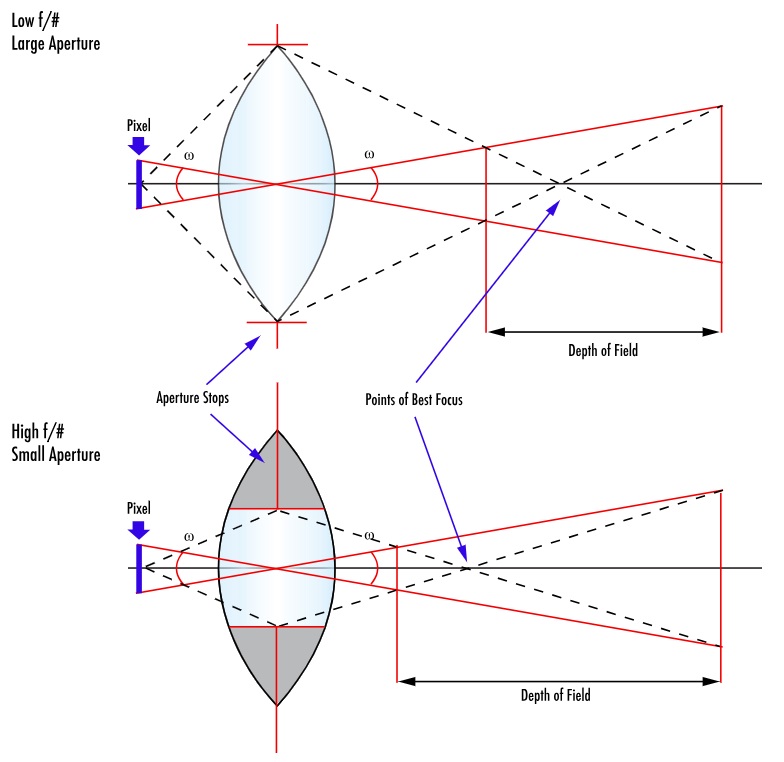

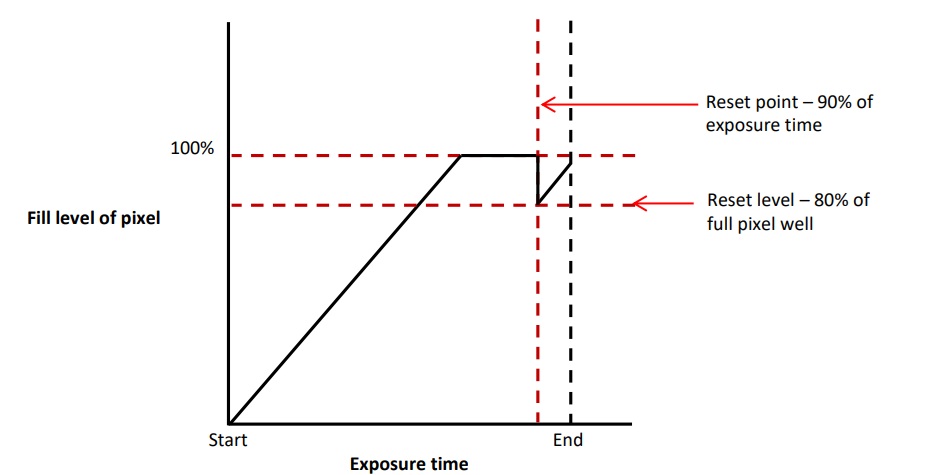

Another way: multi-slope pixel generation on CMOS sensors

The rise of CMOS sensors and their transistor-based pixel architecture enables on-sensor functionality that conveniently supports the generation of HDR images. This may be achieved by resetting pixels that are approaching saturation before the end of the exposure, so that those pixels end up with a spread of values instead of maxing out, as they would have without the reset.

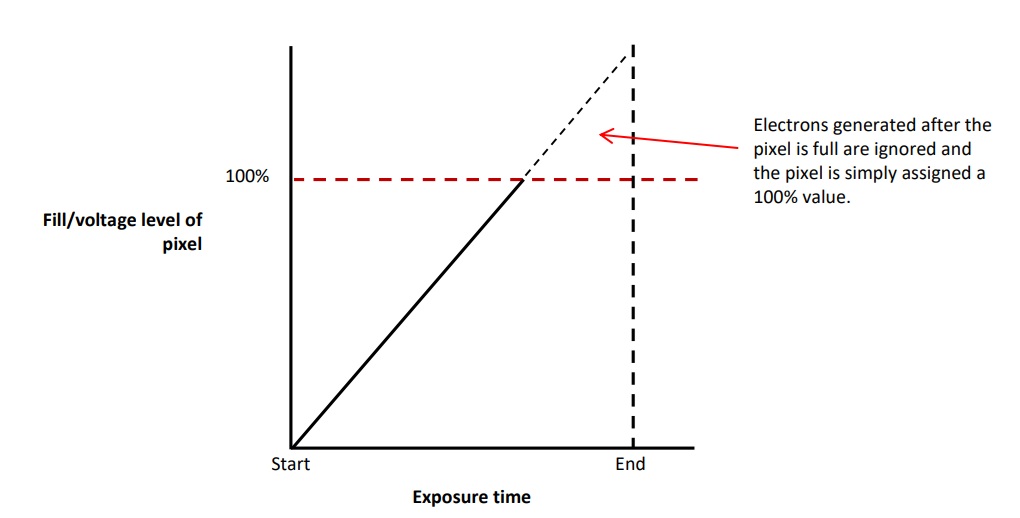

Consider the following two diagrams, and the supporting discussion below:

But thanks to CMOS transistors at each pixel position, the sensor can be programmed to monitor saturation values, and to reset pixels approaching saturation to “partial fill” levels that allow additional fill for the remainder of the exposure.
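A simple simulation shows the effect of such a partial reset on a single pixel. It is a sketch under assumed numbers, not a model of any specific sensor: the function `multi_slope_response`, the full-well value, the exposure length in arbitrary time units, and the single reset at 90% of the exposure to a 600-count partial-fill level are all hypothetical.

```python
def multi_slope_response(flux, exposure=100.0, full_well=1000.0,
                         resets=((90.0, 600.0),)):
    """Final charge of one pixel when partial resets are applied.

    flux: charge collected per time unit (scene brightness at this pixel).
    resets: (time, level) pairs; at each time, any charge above
            `level` is pulled back down to `level`.
    """
    charge, t = 0.0, 0.0
    for reset_time, level in sorted(resets):
        # Integrate up to the reset point, limited by the full well.
        charge = min(full_well, charge + flux * (reset_time - t))
        # Partial reset: only pixels above the level are affected.
        charge = min(charge, level)
        t = reset_time
    # Integrate for the remainder of the exposure.
    return min(full_well, charge + flux * (exposure - t))

for flux in (5, 20, 35, 50):
    single_slope = min(1000.0, flux * 100.0)   # no reset, simply clips
    print(flux, single_slope, multi_slope_response(flux))
```

In this toy setup the dim pixel (flux 5) is untouched by the reset and reads 500 either way. The brighter pixels at flux 20 and 35 would both clip at 1000 without the reset, but read 800 and 950 with it, so they remain distinguishable. Only the brightest pixel (flux 50) still saturates.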

It gets even better

Above was “intro level” HDR, concepts and techniques that provide the foundation. Meanwhile innovators keep taking it to the next level.

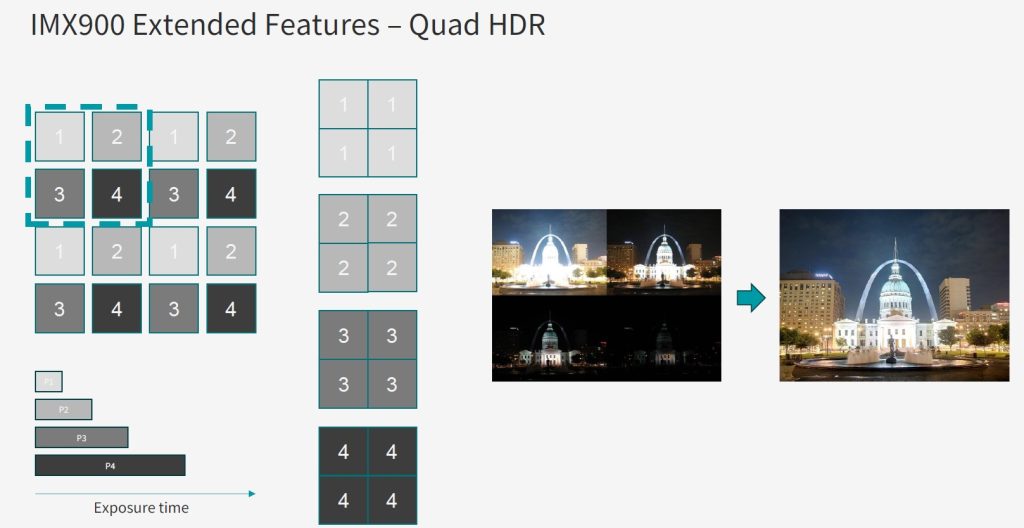

For example, Sony now offers Quad HDR on their IMX900 sensor, available in the IDS uEye low-cost cameras. With Quad HDR, the benefit of getting the dark sections sufficiently exposed without oversaturating the brighter regions is clearly evident.

In the video below, you may jump to position 1 minute 42 seconds for more on Quad HDR:

Even more on HDR:

If you’d like to read a more in-depth treatment of HDR, including more example images, supporting arithmetic and graphical rationale, download our whitepaper on High Dynamic Range Imaging.

Or perhaps you have an application with known nuanced dark regions as well as variation in the saturated areas, for which HDR may add value. Should you do it on-camera/sensor? In an FPGA/frame-grabber? On the PC host? Use lighting techniques to avoid needing HDR altogether? There are a number of different ways to achieve optimal image outcomes, but HDR is certainly a valuable technique for some applications.

Call us at 978-474-0044, and let us guide you to a best-fit solution.

1st Vision’s sales engineers have over 100 years of combined experience to assist in your camera and components selection. With a large portfolio of cameras, lenses, cables, NIC cards and industrial computers, we can provide a full vision solution!

