We introduced these event-based cameras in a previous blog – still a great entry point and overview. In this new blog we’ll highlight use cases. They are pretty compelling.

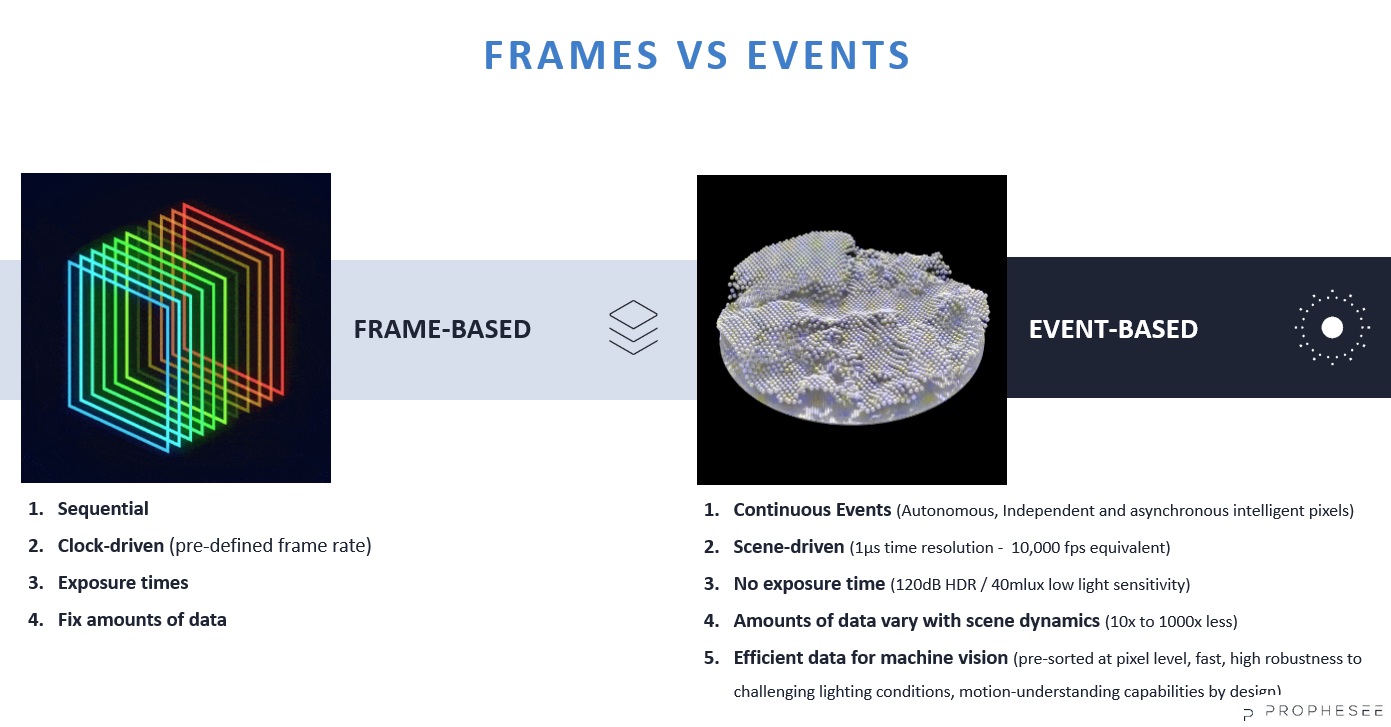

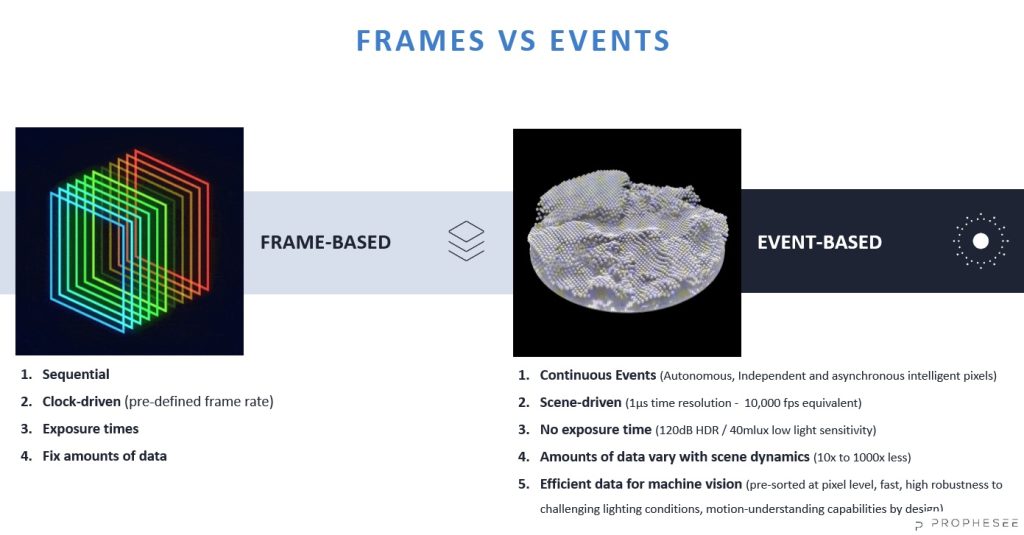

But first we re-run a single graphic to highlight the paradigm shift from frame-based to event-based imaging:

If you come from a frame-based imaging background, as most of us do, it’s worth getting your head around the event-based model. It’s that different, both at the technology level and in what it enables at the application level.

On to use cases and key takeaways…

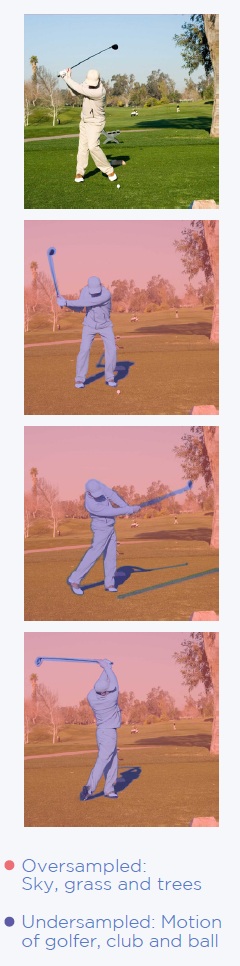

Results instead of raw data: Per the scene-driven remark in the paradigm comparison graphic above, observe the video analysis clip below. By ONLY picking up on motion, the camera delivers exactly and only what one wants – the people and suitcases passing through the field of view.

A frame-based approach to such an application would require complex algorithms to separate the “moving stuff” from the “background stuff”, which is compute-intensive. It may be doable the hard way, but it takes effort and doesn’t perform as well.

Extremely high dynamic range

See in the dark: the Sony Prophesee IMX636 sensor detects contrast changes at light levels as low as 0.08 lux.

Detect extremely fast processes

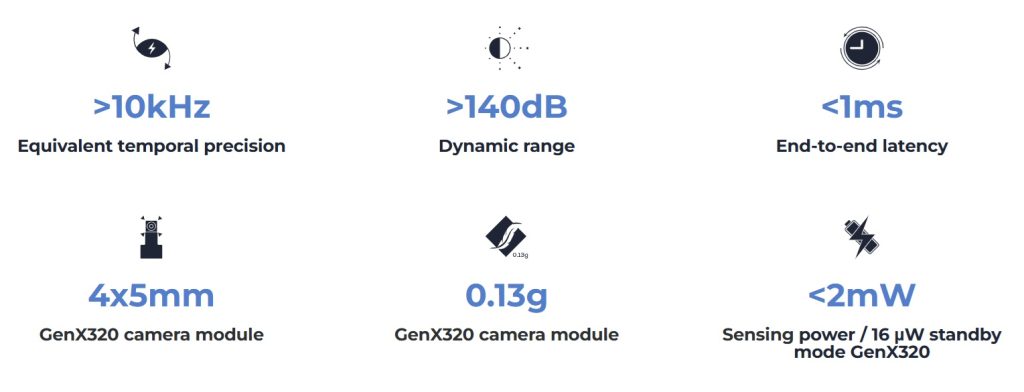

Temporal resolution < 100 µs: the minimum measurable time difference between two consecutive pixel events is less than 100 µs. That’s comparable to a traditional frame-based camera running at more than 10,000 FPS, but without the motion blur.
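The 10,000 FPS figure comes straight from inverting the time interval: separating events 100 µs apart is like having a frame period of 100 µs, and 1 / 100 µs = 10,000 frames per second. A minimal Python sketch of that arithmetic (the function name is our own, for illustration only):

```python
def equivalent_fps(temporal_resolution_us: float) -> float:
    """Frame rate whose frame interval equals the given temporal resolution.

    temporal_resolution_us: time between two distinguishable events, in microseconds.
    """
    if temporal_resolution_us <= 0:
        raise ValueError("temporal resolution must be positive")
    # 1 second = 1,000,000 microseconds, so fps = 1e6 / interval_in_us
    return 1_000_000 / temporal_resolution_us

print(equivalent_fps(100))  # 10000.0
print(equivalent_fps(50))   # 20000.0
```

Because the sensor's resolution is better than 100 µs, the true frame-rate equivalent is above 10,000 FPS, not exactly at it.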


Efficient data processing

Only changes are captured – static areas are ignored. So there is (much) less data to process than with a frame-based approach. This saves memory, data transfer volumes, and compute time.
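To put rough numbers on “much less data”, here is a back-of-the-envelope Python sketch. A frame-based camera sends every pixel in every frame; an event-based camera sends only the events. All figures below (resolution, bit depth, frame rate, event rate, bytes per event) are illustrative assumptions, not IMX636 or uEye XCP-E specifications, and the real event rate depends heavily on how much is moving in the scene:

```python
def frame_bytes_per_second(width, height, bytes_per_pixel, fps):
    """Raw data rate of a frame-based camera: every pixel, every frame."""
    return width * height * bytes_per_pixel * fps

def event_bytes_per_second(events_per_second, bytes_per_event):
    """Data rate of an event-based camera: only pixels that changed."""
    return events_per_second * bytes_per_event

# Hypothetical scenario: 1280 x 720, 8-bit mono at 1,000 fps versus
# 2 million events per second at an assumed 4 bytes per event.
frame_rate = frame_bytes_per_second(1280, 720, 1, 1000)
event_rate = event_bytes_per_second(2_000_000, 4)

print(frame_rate)               # 921600000  (~922 MB/s)
print(event_rate)               # 8000000    (8 MB/s)
print(frame_rate / event_rate)  # 115.2
```

A mostly static scene drives the event rate (and the data volume) down further; a scene full of motion drives it up, so the ratio is scene-dependent rather than fixed.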

The astute reader will have already inferred that efficient data processing is a corollary of the “results instead of raw data” message and video earlier in this blog. It’s such a key point that it bears repeating.

The following short video shows why the Sony Prophesee IMX636 sends so much less data: it only senses “what’s changed”. Essentially, a pixel lights up exactly and only when the brightness at that position changes, which in practice usually means something moved there, and it stays quiet when nothing changes.
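A common simplified way to describe an event pixel: it fires when the logarithm of its brightness changes by more than a contrast threshold, and it reports the position, a timestamp, and a polarity (brighter or darker). The Python sketch below emulates that idea by comparing two frames. This is an assumption-laden illustration, not how the IMX636 works internally: a real event pixel operates asynchronously and continuously, with no frames involved, and the `threshold` value and `emulate_events` name are hypothetical:

```python
import math

def emulate_events(prev_frame, curr_frame, t_us, threshold=0.2):
    """Emit (x, y, t_us, polarity) for pixels whose log brightness changed by >= threshold.

    Simplified frame-pair emulation of an event pixel; threshold is a
    hypothetical contrast setting. Polarity is +1 (brighter) or -1 (darker).
    """
    events = []
    for y, (prev_row, curr_row) in enumerate(zip(prev_frame, curr_frame)):
        for x, (p, c) in enumerate(zip(prev_row, curr_row)):
            # +1 avoids log(0) for fully black pixels
            delta = math.log(c + 1) - math.log(p + 1)
            if abs(delta) >= threshold:
                events.append((x, y, t_us, 1 if delta > 0 else -1))
    return events

static = [[50, 50, 50], [50, 50, 50]]
moved = [[50, 200, 50], [50, 50, 10]]

print(emulate_events(static, static, t_us=0))   # []  (nothing changed, no data)
print(emulate_events(static, moved, t_us=100))  # [(1, 0, 100, 1), (2, 1, 100, -1)]
```

The unchanged scene produces no output at all, while the changed scene produces just two events out of six pixels, which is the “only what’s changed” behavior described above.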

Use cases

Some of the videos above suggest certain use cases, but let’s spell out a few:

Monitoring: Compared to CCTV, the IDS uEye XCP-E cameras are more compact and show only the action, rather than the steady-state scene as well. Or combine the two, using an event-based camera to log the timestamps of interest.

Video analysis and Smart City people tracking: A level up from simple monitoring, people tracking doesn’t just detect motion but also infers and projects trajectories, which can lead to or assist in threat detection.

Drone detection: As with people tracking, an event-based camera picks out what’s moving against a field of static clutter, because the static clutter generates no events.

Gesture recognition: UI design opportunities, whether for pupil tracking, head-motion tracking, and/or hand and finger tracking.

Industrial applications: Monitor equipment vibration to optimize preventative maintenance and/or anticipate and avoid catastrophic breakdown.

Counting: E.g. pill production and sorting, food processing, or other fast-but-small-items conveyor applications.
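For counting, one simple approach is to watch a narrow “trip line” region across the conveyor: each passing item produces a burst of events, separated from the next item by a quiet gap. The Python sketch below counts those bursts from event timestamps. The trip-line setup, the `count_objects` name, and the `min_gap_us` value are hypothetical, chosen for illustration; a production system would also need to handle items that touch or overlap:

```python
def count_objects(timestamps_us, min_gap_us=5_000):
    """Count bursts of events separated by quiet gaps longer than min_gap_us.

    timestamps_us: event times (microseconds) from a hypothetical narrow
    region of interest that each item crosses once.
    """
    count = 0
    last_t = None
    for t in sorted(timestamps_us):
        if last_t is None or t - last_t > min_gap_us:
            count += 1  # quiet gap ended: a new item has entered the trip line
        last_t = t
    return count

events = [100, 120, 180, 9_000, 9_050, 9_070, 20_000, 20_010]
print(count_objects(events))  # 3
```

Because only the trip-line pixels matter and static background produces no events, the processing load scales with the number of items passing, not with the camera's resolution.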

Takeaway: If it moves, an event-based camera will find it.

See the entire family of IDS uEye XCP-E cameras. Call us at 978-474-0044. Tell us a little about your application and we’ll help you pick the ideal camera and accessories.

#IDS #uEye #EventBased

1st Vision’s sales engineers have over 100 years of combined experience to assist in your camera and components selection. With a large portfolio of cameras, lenses, cables, NIC cards and industrial computers, we can provide a full vision solution!

About you: We want to hear from you! We’ve built our brand on our know-how and like to educate the marketplace on imaging technology topics… What would you like to hear about?… Drop a line to info@1stvision.com with what topics you’d like to know more about.