Remember when machine vision pioneers got stuff done with VGA sensors at 0.3MP? And when the industry got really excited about 1MP sensors? Moore’s law keeps driving capacities and performance up, and relative costs down. With the Teledyne e2v Emerald 67MP sensor, cameras like the Genie Nano-10GigE-8200 open up new possibilities.

So what? The 67MP view above right doesn’t appear massively compelling…

Well, at this view, without zooming in, we’d agree…

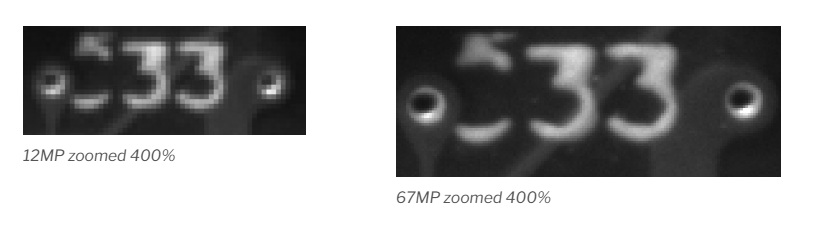

But at 400% zoom, below, look at the pixelation differences:

Both images below show the same target region, with the same lighting and lens, each zoomed (with Gimp) to 400%. The 12MP image shows enough pixelation to raise doubts about effective edge detection, both on the identifying digits (33) and on the metal-rimmed holes. The 67MP image has far less pixelation, and so passes a readily usable image to the host for processing. How much resolution does your application require?

Important aside: sensor format and lens quality matter too

Sensor format refers to the physical size of the sensor, together with its pixel shape and pixel density. Of course the lens must physically mount to the camera body (e.g. S, C, M42, etc.), but it must also create an image circle that appropriately covers the sensor’s pixel array. The Genie Nano-10GigE-8200 uses the Teledyne e2v Emerald 67M, which packs just over 67 million pixels, each square pixel just 2.5 µm wide and high, onto a CMOS pixel array of only about 20.5mm x 20.5mm (the size that the pixel count and pitch imply for a square array).
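To see how large an image circle a lens must deliver, one can estimate the pixel array's dimensions from pixel count and pixel pitch. The sketch below assumes a square 8192 x 8192 array (consistent with "just over 67 million pixels", but check the sensor datasheet for the exact figure); the function name is ours, for illustration only.

```python
import math

def sensor_dimensions_mm(width_px, height_px, pixel_pitch_um):
    """Return (width, height, diagonal) of the active pixel array in mm."""
    width_mm = width_px * pixel_pitch_um / 1000.0
    height_mm = height_px * pixel_pitch_um / 1000.0
    # The lens image circle must be at least as large as the array diagonal.
    diagonal_mm = math.hypot(width_mm, height_mm)
    return width_mm, height_mm, diagonal_mm

# Assumed array: 8192 x 8192 pixels at 2.5 um pitch.
w, h, d = sensor_dimensions_mm(8192, 8192, 2.5)
print(f"{w:.2f} mm x {h:.2f} mm, diagonal {d:.2f} mm")
# prints: 20.48 mm x 20.48 mm, diagonal 28.96 mm
```

A lens whose image circle is smaller than that diagonal will vignette or lose sharpness toward the corners, however well it mounts to the body.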

Compare other good quality cameras and sensors with pixel sizes in the 4 – 5 µm range. Larger pixels lead EITHER to fewer pixels overall in the same size sensor array, OR to a much larger sensor to accommodate the same number of pixels. The former may limit what can be accomplished with a single camera. The latter would necessarily make the camera body larger, the lens mount larger, and the lens more expensive to manufacture.
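The either/or trade-off can be put in numbers. This is a rough sketch using an assumed reference array of 8192 pixels per side at 2.5 µm pitch (20,480 µm per side); the helper names are hypothetical.

```python
def pixels_in_same_area(side_um, pixel_pitch_um):
    """Pixels per side and total pixels for a square array of fixed size."""
    per_side = int(side_um // pixel_pitch_um)
    return per_side, per_side ** 2

def side_for_same_pixel_count(pixels_per_side, pixel_pitch_um):
    """Side length in mm needed to keep the same pixel count at a given pitch."""
    return pixels_per_side * pixel_pitch_um / 1000.0

REFERENCE_SIDE_UM = 20480      # assumed: 8192 pixels x 2.5 um
REFERENCE_PX_PER_SIDE = 8192   # assumed square array

for pitch_um in (2.5, 4.0, 5.0):
    per_side, total = pixels_in_same_area(REFERENCE_SIDE_UM, pitch_um)
    side_mm = side_for_same_pixel_count(REFERENCE_PX_PER_SIDE, pitch_um)
    print(f"{pitch_um} um pitch: {total / 1e6:.1f} MP in the same area, "
          f"or a {side_mm:.2f} mm side for the same pixel count")
```

Under these assumptions, 5 µm pixels give either about a quarter of the pixel count in the same area or a sensor twice as wide and high.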

The lens quality, typically expressed via the Modulation Transfer Function (MTF), is also important. Not all lenses are created equal! A “good” quality lens may be enough for certain applications. For more demanding applications, one would be wasting a large format sensor if the lens’s performance falls below the sensor’s capabilities.

Two different lenses were used to take the above images, both fitting the sensor size. However, the right image was taken with a lens designed for smaller pixels than the lens used for the left image. – Courtesy Teledyne DALSA
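One way to relate lens MTF to a sensor is the sensor's Nyquist frequency, the finest line-pair spacing the pixel grid can sample, which is 1 / (2 x pixel pitch). The sketch below is only a starting point for reading an MTF chart, not a full lens-matching procedure; the function name is ours.

```python
def nyquist_lp_per_mm(pixel_pitch_um):
    """Sensor Nyquist frequency in line pairs per mm for a given pixel pitch."""
    pitch_mm = pixel_pitch_um / 1000.0
    return 1.0 / (2.0 * pitch_mm)

for pitch_um in (2.5, 4.0, 5.0):
    print(f"{pitch_um} um pixels: {nyquist_lp_per_mm(pitch_um):.0f} lp/mm")
```

Smaller pixels push the Nyquist frequency higher, so a lens that holds useful contrast at the frequencies a 4 – 5 µm sensor needs may have much less contrast left at the frequencies a 2.5 µm sensor can resolve.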

The high-level views of the test chart above tease at the point we’re making, but it really pops if we zoom in. Look at the difference in contrast in the two images below!

The takeaway point of this segment is that lensing matters! Machine vision users benefit tremendously from a market with specialized sensor, camera, lensing, and lighting suppliers. Even within the same supplier’s lineup, there are often sensors or lenses pitched at differing performance requirements. Consider our Knowledge Base guide on Lens Quality Considerations. Or call us at 978-474-0044.

Another example:

Below, see the same concentric rings of a test chart, under the same lighting. The left image was obtained with a good 12MP sensor and a good quality lens matched to the sensor format and pixel size. The right image used the 67MP sensor in the Genie Nano-10GigE-8200, also with a well-matched lens.

If you need a single-camera solution for a large target with high levels of detail, there’s no way around it: one needs enough pixels, together with a well-suited lens.

The Genie Nano-10GigE-8200, in both monochrome and color versions, is more affordable than you might think.
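A back-of-the-envelope way to estimate "enough pixels" is to divide the field of view by the smallest feature to be resolved, then multiply by the number of pixels wanted across that feature. The numbers below are hypothetical, and the default of 3 pixels per feature is an assumed rule of thumb that should be adjusted for your algorithm.

```python
import math

def required_pixels(fov_mm, smallest_feature_mm, pixels_per_feature=3):
    """Pixels needed along one axis to cover the field of view."""
    return math.ceil(fov_mm / smallest_feature_mm * pixels_per_feature)

# Hypothetical target: 500 mm x 500 mm, smallest feature 0.25 mm.
px_per_axis = required_pixels(500, 0.25)
total_mp = px_per_axis ** 2 / 1e6
print(f"{px_per_axis} pixels per axis, about {total_mp:.0f} MP for a square view")
# prints: 6000 pixels per axis, about 36 MP for a square view
```

In this made-up case, a 12MP sensor falls short for a single-camera setup, while a 67MP sensor leaves some margin.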

Once more with feeling…

Which of the following images will lead to the more effective outcomes? Choose your sensor, camera, lens, and lighting accordingly. Call us at 978-474-0044. Our sales engineers love to create solutions for our customers.

1st Vision’s sales engineers have over 100 years of combined experience to assist in your camera and components selection. With a large portfolio of cameras, lenses, cables, network interface cards and industrial computers, we can provide a full vision solution!

About you: We want to hear from you! We’ve built our brand on our know-how and like to educate the marketplace on imaging technology topics… What would you like to hear about?… Drop a line to info@1stvision.com with what topics you’d like to know more about.