Lighting matters as much as, or more than, camera (sensor) selection and optics (lensing). A sensor and lens that are “good enough”, when used with good lighting, are often all one needs. Conversely, a superior sensor and lens, with poor lighting, can underperform. Read on for clear examples of why machine vision lights are as important as sensors and optics!

Why is lighting so important? Contrast is essential for human vision and machine vision alike. Nighttime hiking isn’t very popular – for a reason – it’s not safe and it’s no fun if one can’t see rocks, roots, or vistas. In machine vision, for the software to interpret the image, one first has to obtain a good image. And a good image is one with maximum contrast – such that the pixels corresponding to real-world coordinates are saturated, unsaturated, or “in between”, with the best spread of intensity achievable.

Only with contrast can one detect edges, identify features, and effectively interpret an image. Choosing a camera with a good sensor is important. So is an appropriately matched lens. But just as important is good lighting, well-aligned – to set up your application for success.
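To make “contrast” and “spread of intensity” concrete, here is a minimal sketch of two simple measurements on an 8-bit monochrome image. It assumes the image has already been flattened into a plain list of grey levels (0 to 255); the function names are illustrative, and Michelson contrast is just one common definition, not necessarily the one a given vision library uses.

```python
def michelson_contrast(pixels):
    """Return (max - min) / (max + min) for a flat list of grey levels."""
    lo, hi = min(pixels), max(pixels)
    if hi + lo == 0:
        return 0.0  # an all-black image has no measurable contrast
    return (hi - lo) / (hi + lo)


def saturated_fraction(pixels, full_scale=255):
    """Fraction of pixels at or above full scale (clipped highlights)."""
    return sum(1 for p in pixels if p >= full_scale) / len(pixels)


# Hypothetical grey levels: a flat, poorly lit image vs. a well-lit one.
poorly_lit = [100, 110, 120, 130, 140, 150]
well_lit = [5, 20, 90, 160, 230, 250]

print(michelson_contrast(poorly_lit), saturated_fraction(poorly_lit))
print(michelson_contrast(well_lit), saturated_fraction(well_lit))
```

A higher contrast value with few saturated pixels suggests the lighting is spreading the scene across the sensor's range without clipping.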

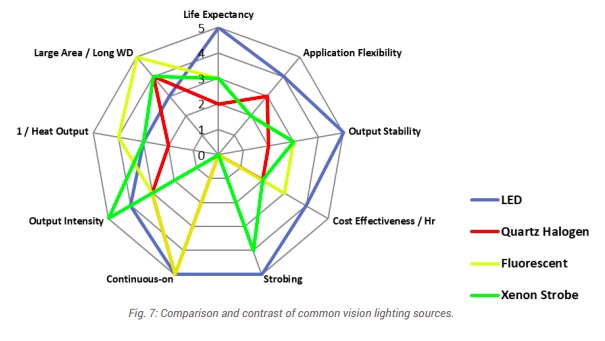

What’s the best light source? Unless you can count on the sun or ambient lighting, or have no other option, you may choose from several types of light source:

- Fluorescent

- Quartz Halogen – Fiber Optics

- LED – Light Emitting Diode

- Metal Halide (Mercury)

- Xenon (Strobe)

By far the most popular light source is LED: it is affordable, stable, long-lived, and available in diverse wavelengths and shapes (bar lights, ring lights, etc.), so it checks most of the key boxes.

The other light types each have their place, but those places are more specialized. For comprehensive treatment of the topics summarized here, see “A Practical Guide to Machine Vision Lighting” in our Knowledgebase, courtesy of Advanced Illumination.

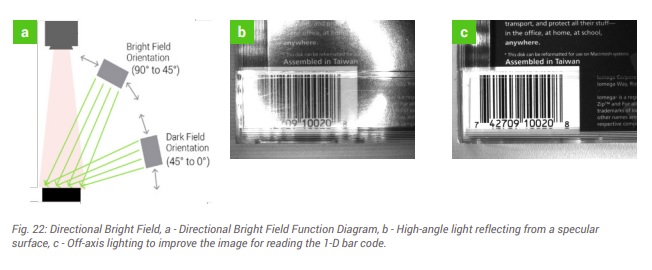

Lighting geometry and techniques: There’s a tendency among newcomers to machine vision lighting to underestimate lighting design for an application. Buying an LED and lighting up the target may fill up sensor pixel wells, but not all images are equally useful. Consider images (b) and (c) below – the bar code in (c) shows high contrast between the black bars and the white field. Image (b) is somewhere between unusable and marginally usable, with reflection obscuring portions of the target, and portions of the (should be) white field appearing more grey than white.

As shown in diagram (a) of Figure 22 above, understanding bright field vs dark field concepts, as well as the specular qualities of the surface being imaged, can lead to radically different outcomes. A little bit of lighting theory, together with some experimentation and tuning, is well worth the effort.

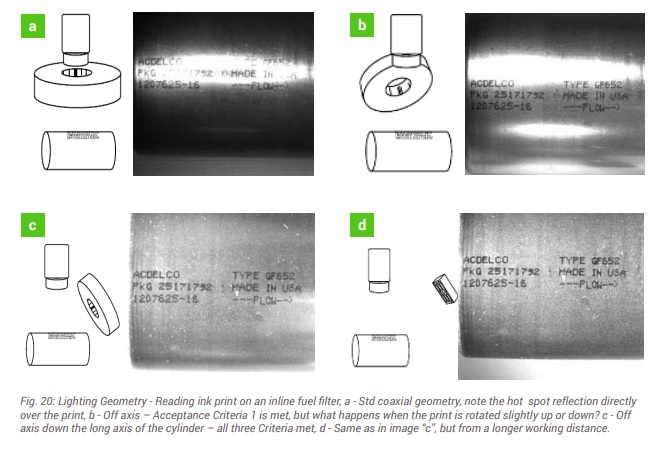

Now for a more complex example – below we could characterize images (a), (b), (c) and (d) as poor, marginal, good, and superior, respectively. Component cost is invariant, but the outcomes are sure different!

To learn more, download the whitepaper or call us at (978) 474-0044.

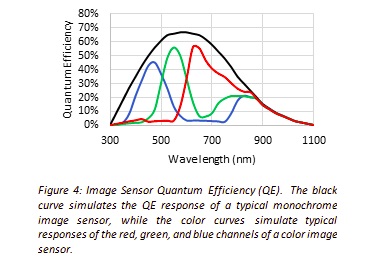

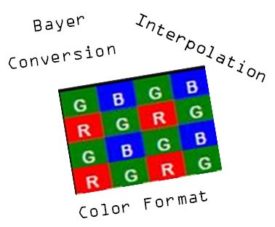

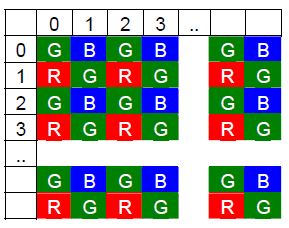

Color light – above we showed monochrome examples – black and white… and grey levels in between. Many machine vision applications are in fact best addressed in the monochrome space, with no benefit from using color. But understanding what surfaces will reflect or absorb certain wavelengths is crucial to optimizing outcomes – regardless of whether working in monochrome, color, infrared (IR), or ultraviolet (UV).

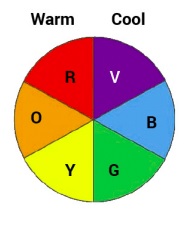

Beating the same drum throughout, it’s about maximizing contrast. Consider the color wheel shown below. The most contrast is generated by taking advantage of opposing colors on the wheel. For example, green light best suppresses red reflection.

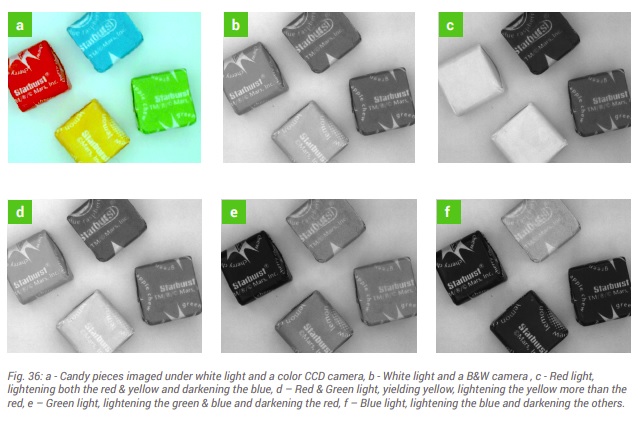
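The opposing-colors idea can be sketched numerically. The example below uses a deliberately idealized three-band (red, green, blue) model in which a monochrome sensor simply sums light intensity times surface reflectance per band. The reflectance values for the red mark and white background are made-up illustrative numbers, and real LEDs, surfaces, and sensors have continuous spectra, so treat this as a toy model only.

```python
def mono_response(light_rgb, reflectance_rgb):
    """Grey level (0..1) seen by an idealized monochrome sensor."""
    return sum(l * r for l, r in zip(light_rgb, reflectance_rgb))


def contrast(a, b):
    """Michelson-style contrast between two grey levels."""
    return abs(a - b) / (a + b) if (a + b) else 0.0


# Assumed reflectances per (R, G, B) band, for illustration only.
RED_MARK = (0.9, 0.1, 0.1)   # reflects mostly red
WHITE_BG = (0.9, 0.9, 0.9)   # reflects all bands about equally

RED_LIGHT = (1.0, 0.0, 0.0)
GREEN_LIGHT = (0.0, 1.0, 0.0)

for name, light in (("red", RED_LIGHT), ("green", GREEN_LIGHT)):
    mark = mono_response(light, RED_MARK)
    background = mono_response(light, WHITE_BG)
    print(f"{name} light: mark={mark:.2f} background={background:.2f} "
          f"contrast={contrast(mark, background):.2f}")
```

In this toy model the red mark vanishes against the white background under red light, while under green light it appears dark and stands out clearly, which matches the color-wheel intuition.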

One can use actual color light sources, or white light together with well-chosen wavelength “pass” or “block” filters. This is nicely illustrated in Fig. 36 below. Take a moment to correlate the configurations used for each of images (a) – (f), relative to the color wheel above. Depending on one’s application goals, sometimes there are several possible combinations of sensor, lighting, and filters to achieve the desired result.

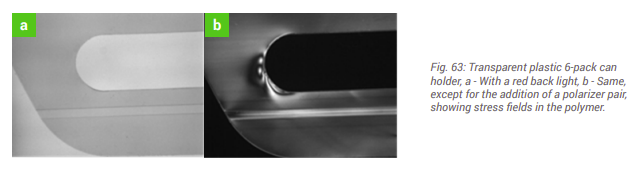

Filters can help. Consider images (a) and (b) in Fig. 63 below. The same plastic 6-pack holder is shown in both images, but only image (b) reveals stress fields that, were the product to be shipped, might cause dropped product and reduced consumer confidence in one’s brand. By designing in polarizing filters, this can be the basis for a value-added application, automating quality control in a way that might not have been otherwise achievable – or not at such a low cost.

For more comprehensive treatment of filter applications, see either or both Knowledgebase documents:

Powering the lights – should they be voltage-driven or current-driven? How are LEDs powered? When should one strobe rather than run in continuous mode? How does one integrate the light controller with the camera and software? These are all worth understanding – or having someone on your team, whether in-house or a trusted partner, who does.
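As one illustration of the strobe question, a common rule of thumb is to keep the light pulse short enough that a moving part travels less than about one pixel during the pulse. The sketch below works through that arithmetic. The function names, the one-pixel blur budget, and the example numbers are illustrative assumptions, not figures from any particular controller or guide.

```python
def max_pulse_width_s(speed_mm_s, mm_per_pixel, max_blur_px=1.0):
    """Longest light pulse (seconds) that keeps motion blur under max_blur_px."""
    if speed_mm_s <= 0:
        raise ValueError("speed must be positive")
    return max_blur_px * mm_per_pixel / speed_mm_s


def duty_cycle(pulse_width_s, frames_per_second):
    """Fraction of time the light is on when strobed once per frame."""
    return pulse_width_s * frames_per_second


# Hypothetical line: parts moving at 500 mm/s, imaged at 0.1 mm per pixel,
# camera triggered at 30 frames per second.
pulse = max_pulse_width_s(speed_mm_s=500.0, mm_per_pixel=0.1)
print(f"pulse width: {pulse * 1e6:.0f} microseconds")
print(f"duty cycle: {duty_cycle(pulse, 30.0):.2%}")
```

A very low duty cycle like this is one reason strobing is attractive for fast-moving parts; whether a given light and controller can safely deliver the required pulse is a question for the manufacturer's specifications.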

For comprehensive treatment of the topics summarized here, see Advanced Illumination’s “A Practical Guide to Machine Vision Lighting” in our Knowledgebase:

This blog is intended to whet your appetite for lighting – but it only skims the surface. Machine vision lights are as important as sensors and optics. Please download the guide linked just above to deepen your knowledge. Or if you want help with a specific application, you may draw on the experience of our sales engineers and trusted partners.

1st Vision’s sales engineers have over 100 years of combined experience to assist in your camera and components selection. With a large portfolio of lenses, cables, NIC cards, and industrial computers, we can provide a full vision solution!