In any industrial camera application, one key setting is the exposure time of the camera. If this is set arbitrarily, the resulting image may be blurry due to movement of the scene we are imaging. To get the most out of our settings, we can calculate the longest exposure time that still avoids blur, which maximizes our scene brightness. In this blog post, we will explain the effects of exposure and show how to calculate it for a given application.

First, let’s explain camera exposure. Exposure time, or shutter speed, is the amount of time you let light fall on the image sensor. The longer the exposure time, the more you ‘expose’ the sensor, charging up the pixels to make them brighter. In photography cameras, shutter speeds are usually given as a fraction of a second, like 1/60, 1/125, or 1/1000 of a second, a convention that comes from the film days. In industrial cameras, exposure time is normally given in milliseconds, which is the same quantity written as a decimal (e.g. 1/60 sec = 0.0167 seconds, or about 16.7 ms).
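To make the conversion concrete, here is a minimal sketch that turns a photographic shutter-speed fraction into milliseconds. The function name `shutter_to_ms` is a hypothetical helper chosen for illustration, not part of any camera SDK.

```python
from fractions import Fraction


def shutter_to_ms(shutter: str) -> float:
    """Convert a shutter-speed fraction such as '1/60' (seconds) to milliseconds.

    Hypothetical helper for illustration only.
    """
    return float(Fraction(shutter) * 1000)


# Print a few common photographic shutter speeds as exposure times in ms.
for speed in ("1/60", "1/125", "1/500", "1/1000"):
    print(f"{speed} s = {shutter_to_ms(speed):.2f} ms")
```

Most industrial camera software expects the exposure in milliseconds or microseconds, so a conversion like this is typically the first step when starting from a photographic rule of thumb.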

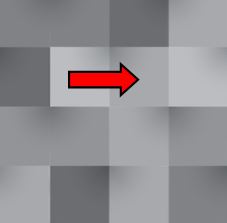

So how does this relate to blur? Blur is what you get when your object moves relative to the sensor and, in turn, moves across 2 or more pixels during the exposure time.

You see this when you take a picture of something moving too fast for the exposure time to fully stop the motion. In the image to the left, we have a crisp picture of the batter, but the ball is moving very fast, causing it to appear blurry. The exposure in this case was 1/500 sec (2 ms), but the ball moved many pixels during this exposure.

The faster the shutter speed, the less the object can move relative to where it started. In machine vision, cameras are fixed so they don’t move; what we are worried about is the effect of the object moving during the exposure time.

Depending on the application, it may or may not be sensitive to blur. For instance, say you have a camera that has a pixel array of 1280 pixels in the x-axis, and your object spans 1000 pixels on the sensor. During the exposure, the object moves 1 pixel to the right. It has moved 1 pixel out of 1000 pixels. This is what we call “pixel blur”. Visibly, however, you cannot notice this. If we have an application in which we’re just viewing a scene and no machine vision algorithms are making decisions on the image, and the object moves only a very small fraction of its total size during exposure, we probably don’t care!

Now assume you are measuring this object using machine vision algorithms. Movement becomes more significant, because you now have uncertainty about the actual size of the object. If an error of 1/1000 is within your tolerances, you are OK. But if your object was only 100 pixels and it moved 1 pixel, this might still be fine for a viewing application, while in a measurement application you are now off by 1%, and that might not be tolerable!
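The comparison above is simple arithmetic, and a short sketch makes it easy to try other object sizes. The function name `blur_fraction` and the 0.5% tolerance used below are hypothetical values chosen for illustration.

```python
def blur_fraction(blur_px: float, object_px: float) -> float:
    """Return the blur as a fraction of the object's size in pixels."""
    return blur_px / object_px


# Assumed measurement tolerance for this illustration: 0.5% of object size.
TOLERANCE = 0.005

for object_px in (1000, 100):
    fraction = blur_fraction(1, object_px)
    verdict = "OK" if fraction <= TOLERANCE else "too much for this tolerance"
    print(f"{object_px}-pixel object, 1 pixel of blur: {fraction:.1%} ({verdict})")
```

With these assumed numbers, the 1000-pixel object stays inside the tolerance and the 100-pixel object does not, which mirrors the two cases described above.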

In most cases, we want crisp images with no pixel blur. The good part is this is relatively easy to calculate! To calculate blur, you need to know the following:

- Camera resolution in pixels (in the direction of travel)

- Field of view (FOV)

- Speed of the object

- Exposure time

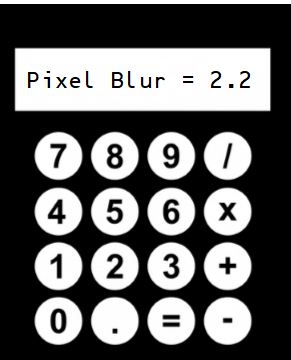

Then you can calculate how many pixels the object will move during the exposure using the following formula:

B = Vp * Te * Np / FOV

Where:

B = Blur in pixels

Vp = part velocity (in the same length unit as FOV, per second)

FOV = Field of view in the direction of motion

Te = Exposure time in seconds

Np = number of pixels spanning the field of view

In the example above, Vp is 1 cm/sec, Te is 33 ms, Np is 640 pixels, and FOV is 10 cm. Then:

B = 1 cm/sec * 0.033 sec * 640 pixels / 10 cm = 2.1 pixels
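The same calculation can be sketched in a few lines of Python. The function and parameter names are hypothetical and simply mirror the symbols in the formula; the only requirement is that velocity and FOV use the same length unit.

```python
def blur_pixels(velocity: float, exposure_s: float, num_pixels: int, fov: float) -> float:
    """Blur in pixels: B = Vp * Te * Np / FOV.

    velocity   -- part velocity, in FOV length units per second (Vp)
    exposure_s -- exposure time in seconds (Te)
    num_pixels -- pixels spanning the field of view (Np)
    fov        -- field of view in the direction of motion (FOV)
    """
    return velocity * exposure_s * num_pixels / fov


# Values from the worked example: 1 cm/sec, 33 ms, 640 pixels, 10 cm.
b = blur_pixels(velocity=1.0, exposure_s=0.033, num_pixels=640, fov=10.0)
print(f"Blur: {b:.1f} pixels")
```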

In most cases, blurring becomes an issue past 1 pixel. In precision measurements, even 1 pixel of blur may be too much, and you will need to use a shorter exposure time.
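Rearranging the blur formula for Te gives the longest exposure that stays within a chosen blur limit: Te = B * FOV / (Vp * Np). The sketch below applies this to the example values, assuming a 1 pixel limit; the function name `max_exposure_s` is hypothetical.

```python
def max_exposure_s(max_blur_px: float, velocity: float, num_pixels: int, fov: float) -> float:
    """Longest exposure (seconds) that keeps blur at or below max_blur_px.

    Rearranged from B = Vp * Te * Np / FOV, so Te = B * FOV / (Vp * Np).
    velocity and fov must use the same length unit.
    """
    return max_blur_px * fov / (velocity * num_pixels)


# Example values from the text (1 cm/sec, 640 pixels, 10 cm), 1 pixel blur limit.
te = max_exposure_s(max_blur_px=1.0, velocity=1.0, num_pixels=640, fov=10.0)
print(f"Maximum exposure: {te * 1000:.1f} ms")
```

For the example setup this comes out to roughly 15.6 ms, so the 33 ms exposure would need to be cut by about half to stay within 1 pixel of blur.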

1st Vision has over 100 years of combined experience. Contact us to help you calculate the correct exposure.

1st Vision’s sales engineers have over 100 years of combined experience to assist in your camera selection. With a large portfolio of lenses, cables, NIC cards, and industrial computers, we can provide a full vision solution!

Related blog posts that you may also find helpful are below:

Imaging Basics: How to Calculate Resolution for Machine Vision