Many clients call us about doing measurements on grey scale data, but want to use a color machine vision industrial camera because they want the operator or client to see a more ‘realistic’ picture. For instance, if you are inspecting PCBs and need to read characters with good precision, but also need to see the colors on a ribbon cable, you are forced to use a color camera.

In these applications, you could extract a monochrome image from the color sensor for processing, and use the color image for cataloging and visualization. But the question is, how much data is lost by using a color camera in mono mode?

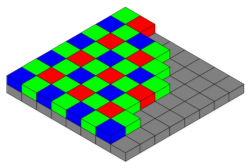

First, you need to understand how a color camera works and how it gets its picture. Cameras other than 3-CCD models use a Bayer filter, which is a mosaic of red, green, and blue filters, one over each pixel. Each group of 4 pixels has 2 green, 1 red, and 1 blue pixel. (Because the eye is most sensitive to green, green gets more pixels to mimic that response.)
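As a minimal sketch of the layout described above, the snippet below builds a small Bayer mosaic and counts the filters in one 2x2 group. The RGGB ordering and the function names are assumptions for illustration; real sensors may start the pattern on a different color.

```python
def bayer_color(row, col):
    """Return the filter color over a pixel, assuming an RGGB layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def bayer_pattern(height, width):
    """Build a height x width grid of filter colors."""
    return [[bayer_color(r, c) for c in range(width)] for r in range(height)]


pattern = bayer_pattern(4, 4)
for line in pattern:
    print(" ".join(line))

# Count the filters in the top-left group of 4 pixels.
group = [pattern[r][c] for r in range(2) for c in range(2)]
counts = {color: group.count(color) for color in "RGB"}
print(counts)  # {'R': 1, 'G': 2, 'B': 1}
```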

To get a color image out, each output pixel is computed as a weighted sum of its nearest neighbor pixels, a process known as Bayer interpolation. The color accuracy of these cameras depends on the original image and on how the camera’s algorithms interpolate the red, green, and blue values for each pixel.
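To make the idea concrete, here is a rough sketch of the simplest form of Bayer interpolation (bilinear averaging), assuming an RGGB layout. It is not the algorithm any particular camera uses; camera vendors typically apply more sophisticated, often proprietary, methods. All names are hypothetical.

```python
def bayer_color(row, col):
    """Filter color over a pixel, assuming an RGGB layout."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def interpolate_channel(raw, row, col, channel):
    """Estimate one color channel at (row, col) from raw Bayer samples."""
    if bayer_color(row, col) == channel:
        return float(raw[row][col])  # this pixel measured the channel directly
    height, width = len(raw), len(raw[0])
    samples = []
    # Average every same-color sample in the 3x3 window (clipped at borders).
    for r in range(max(row - 1, 0), min(row + 2, height)):
        for c in range(max(col - 1, 0), min(col + 2, width)):
            if bayer_color(r, c) == channel:
                samples.append(raw[r][c])
    return sum(samples) / len(samples)


def demosaic(raw):
    """Return a grid of (R, G, B) tuples; expects an image of at least 2x2."""
    return [[tuple(interpolate_channel(raw, r, c, ch) for ch in "RGB")
             for c in range(len(raw[0]))] for r in range(len(raw))]


# Synthetic raw frame: green sites read 200, red and blue sites read 50.
raw = [[200 if bayer_color(r, c) == "G" else 50 for c in range(4)]
       for r in range(4)]
rgb = demosaic(raw)
print(rgb[1][1])  # (50.0, 200.0, 50.0)
```

On a flat, uniformly colored area like this one the interpolation recovers the color exactly; the errors show up where neighboring pixels differ sharply.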

To get monochrome out, one technique is to break the image down into Hue, Saturation, and Intensity, and take the intensity as the grey scale value. Again, this is a mathematical computation. The quality of the output depends on the original image and the algorithms used to compute the output.
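A minimal sketch of that intensity step, assuming the common HSI definition in which intensity is the plain average of the red, green, and blue values. A given camera may use a different weighting, so treat this as one possible conversion rather than the camera's own.

```python
def intensity(r, g, b):
    """HSI intensity: the plain average of the three channels."""
    return (r + g + b) / 3


def to_grey(rgb_image):
    """Convert a grid of (R, G, B) tuples to integer grey scale values."""
    return [[round(intensity(*pixel)) for pixel in row] for row in rgb_image]


# Hypothetical 2x2 color image: white, black, pure red, and a bluish grey.
rgb_image = [[(255, 255, 255), (0, 0, 0)],
             [(255, 0, 0), (30, 60, 90)]]
grey = to_grey(rgb_image)
print(grey)  # [[255, 0], [85, 60]]
```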

An image such as the one above will give an algorithm a hard time, because you are flipping between grey scale values of 0 and 255 from one pixel to the next (assuming the checkerboard lines up with the pixel grid). Since the output of each pixel is based on its nearest neighbors, you could be replacing a black pixel with the average of 4 white ones!

On the other hand, if we had an image with a ramp of pixel values, in other words, each pixel was, say, 1 value less than the one next to it, the average of the nearest neighbors would be very close to the pixel it was replacing.
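The two cases can be compared with a short sketch that replaces the center pixel with the average of its 4 nearest neighbors. The test images are synthetic and the helper name is hypothetical; this is a simplification of what a real interpolation does.

```python
def neighbor_average(image, row, col):
    """Average of the 4 nearest neighbors (up, down, left, right)."""
    neighbors = [image[row - 1][col], image[row + 1][col],
                 image[row][col - 1], image[row][col + 1]]
    return sum(neighbors) / len(neighbors)


# Checkerboard aligned with the pixel grid: values alternate 0 and 255.
checkerboard = [[255 if (r + c) % 2 else 0 for c in range(5)]
                for r in range(5)]
# Horizontal ramp: each pixel is 1 value less than the one to its left.
ramp = [[200 - c for c in range(5)] for _ in range(5)]

for name, image in (("checkerboard", checkerboard), ("ramp", ramp)):
    actual = image[2][2]
    estimate = neighbor_average(image, 2, 2)
    print(name, "actual:", actual, "estimate:", estimate)
# checkerboard actual: 0 estimate: 255.0
# ramp actual: 198 estimate: 198.0
```

The checkerboard is the worst case: the estimate is wrong by the full grey scale range. On the smooth ramp the estimate lands on the true value.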

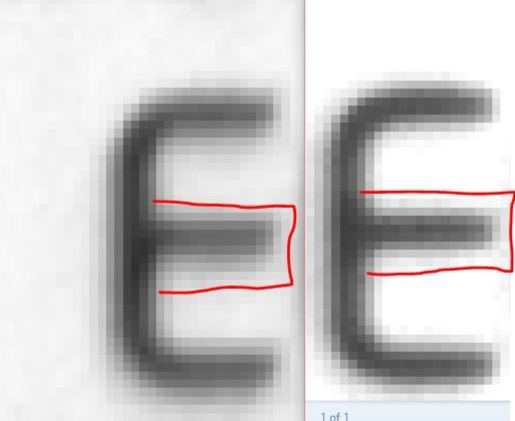

What does all this mean in real world applications? Let’s take a look at 2 images, both from the same brand of camera, where one uses the 5MP Sony Pregius IMX250 monochrome sensor and the other uses the color version of the same sensor. The images were taken with the same exposure and an identical setup. So how do they compare when we take the monochrome output from the color camera and blow both images up to the pixel level?

Looking at the color image (Left), if you expand the picture, you can see that the middle of the E is wider. The transition is not as close to a step function as you would want it to be. The vertical cross section is about 11 pixels, with more black than white. In the monochrome image (Right), the vertical cross section is closer to 8 pixels.
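One way to put a number on such a cross section is to count the consecutive pixels darker than a threshold along a line through the stroke. The sketch below does that on two synthetic profiles; the values are made up to illustrate a sharp and a softened edge and are not measurements from the images above. The threshold of 128 and the function name are also assumptions.

```python
def longest_dark_run(profile, threshold=128):
    """Length of the longest run of consecutive values below the threshold."""
    longest = current = 0
    for value in profile:
        if value < threshold:
            current += 1
            longest = max(longest, current)
        else:
            current = 0
    return longest


# Synthetic profiles across a dark stroke on a white background.
sharp = [255] * 4 + [0] * 5 + [255] * 4                       # step edges
soft = [255, 255, 255, 100, 0, 0, 0, 0, 0, 100, 255, 255, 255]  # smeared edges

print(longest_dark_run(sharp))  # 5
print(longest_dark_run(soft))   # 7
```

The softened edges push intermediate grey values below the threshold, so the same stroke measures wider, which is the kind of effect the interpolated image shows.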

Conclusion:

If you need pixel level measurement, and there is no need for a color image, USE A MONOCHROME MACHINE VISION CAMERA.

If you need to do OCR (as in this example), the above images from either the color or the monochrome camera would work just fine, provided you have enough pixels to start with and your spatial resolution is adequate.

Do you lose 4x in resolution as some people claim? Not with the image I have used above. Maybe with the checkerboard pattern, but if you have multiple pixels across the feature you need to measure, you might be OK using a color camera. It is really application dependent! This post is meant to make you aware of the resolution loss, and 1stVision can help you make the decision if you contact us for a discussion.

1stVision is the leading provider of machine vision components and has a staff of experienced sales engineers to help discuss your application. Please do not hesitate to contact us for help with anything from calculating the resolution you need to calculating focal lengths for your application.

Related links and blog posts

How does 3CCD cameras improve color accuracy and spatial resolution over Bayer cameras

Calculating resolution for machine vision

Use the 1st Vision camera filters to help ID the desired camera