Looking for a single-device solution for process monitoring: an industrial camera that streams and records video on its own? IDS uEye Live SCP | SLE compact industrial cameras run monitoring tasks directly on the camera, with no additional PC required.

If you aren’t using these yet…

Did you miss our blog that introduced them in late 2024? Did we highlight the key features well enough?

Use cases – what would one do with this?

If you need to visualize, document, or monitor processes, this camera is quick to integrate and requires no programming. No PC is needed, because the processing runs on a system on a chip embedded in the camera.

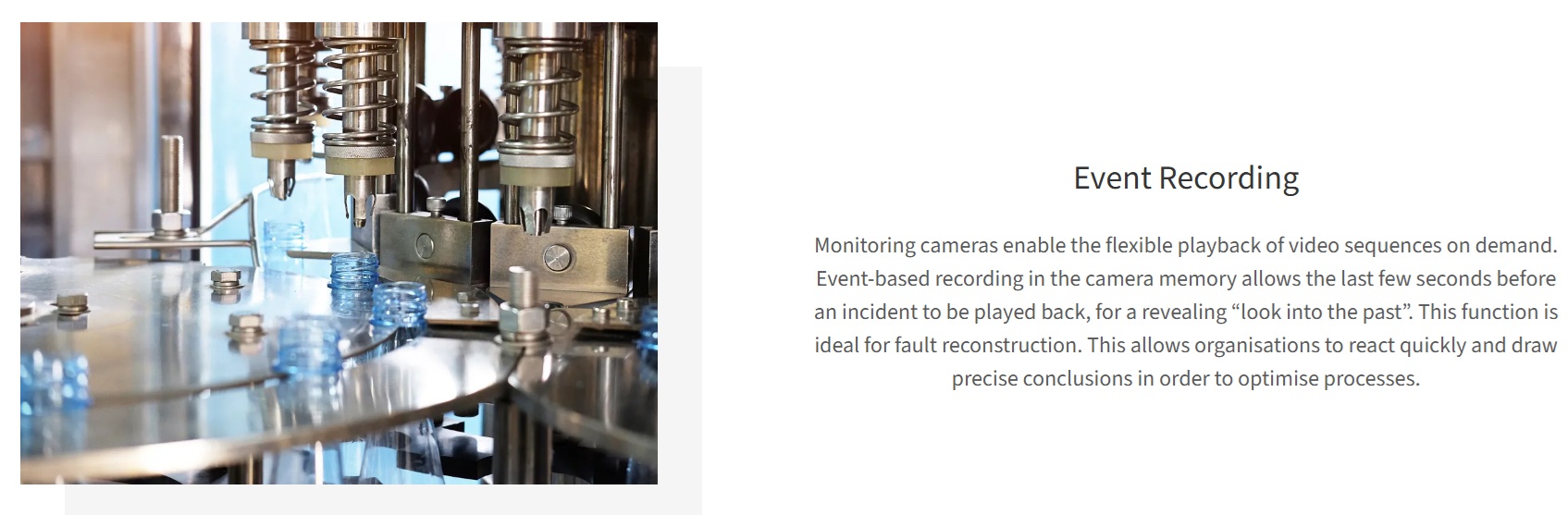

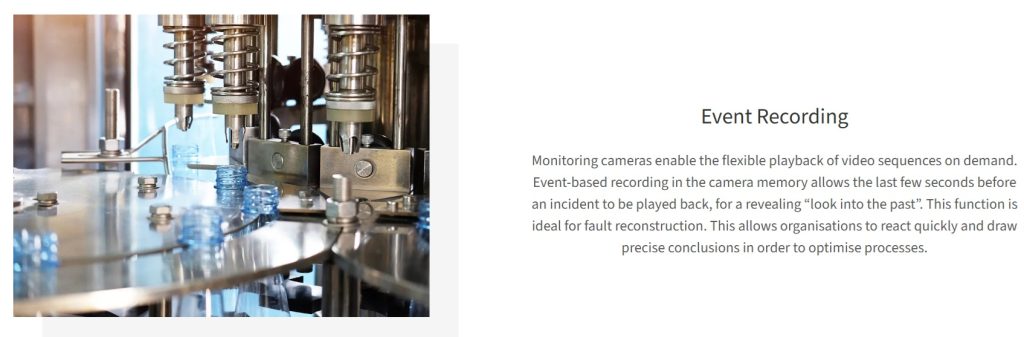

Just as vehicle dashcams and video doorbells capture sequences that are useful to have documented, it can be useful to capture industrial processing sequences that would otherwise have been missed.

Whether for quality control, process improvement, compliance requirements, or liability, videos of “where it went wrong” can be incredibly valuable. The event recording feature keeps a lookback window of the recorded stream, so you can go back and replay the sequence, extract frames, and so on.
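For readers curious how a lookback window works in general, here is a minimal Python sketch of the underlying idea: a fixed-size ring buffer that always holds the most recent frames. This is purely illustrative and is not the camera's firmware or API; the `LookbackBuffer` class, the frame rate, and the window length are all hypothetical, and plain integers stand in for video frames.

```python
from collections import deque


class LookbackBuffer:
    """Hypothetical ring buffer that keeps only the newest frames."""

    def __init__(self, fps: int, lookback_seconds: int):
        # Capacity is the number of frames covering the lookback window.
        self.capacity = fps * lookback_seconds
        # A deque with maxlen silently drops the oldest item when full.
        self._frames = deque(maxlen=self.capacity)

    def push(self, frame):
        """Add the newest frame to the buffer."""
        self._frames.append(frame)

    def snapshot(self):
        """Return the buffered frames, oldest first, e.g. when an event fires."""
        return list(self._frames)


buffer = LookbackBuffer(fps=30, lookback_seconds=2)  # assumed values: 60 frames
for frame_number in range(100):  # integers stand in for real video frames
    buffer.push(frame_number)

clip = buffer.snapshot()  # only the last 60 frames remain available
```

When an event occurs, the snapshot contains the moments leading up to it, which is what makes replaying “where it went wrong” possible without recording everything indefinitely.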

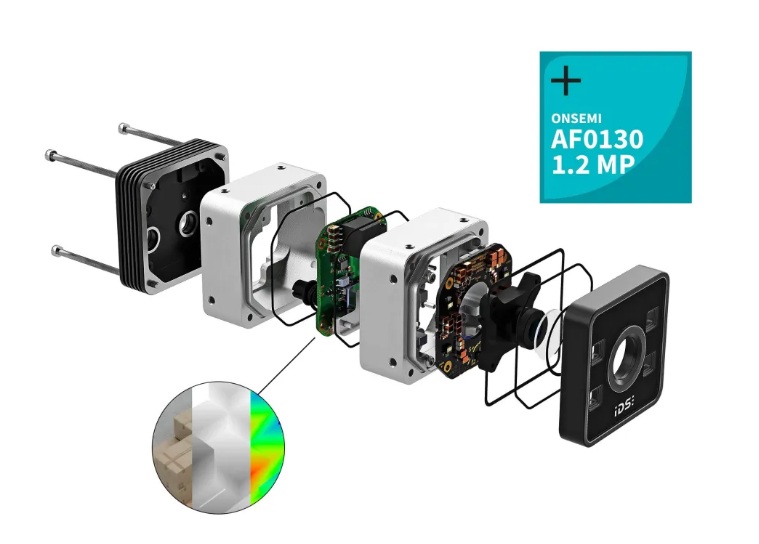

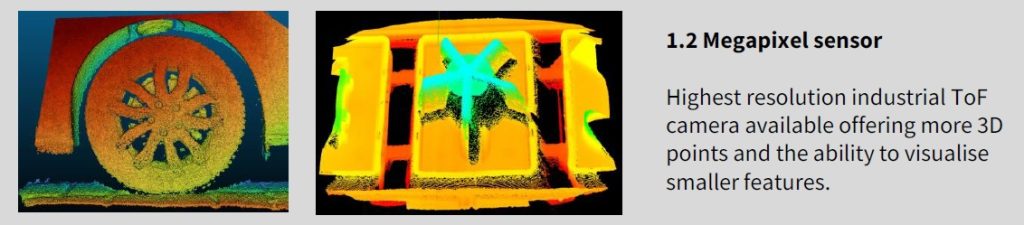

uEye SCP is the housed version:

It’s also available in space-saving board-level options (shown below), as well as a wash-down IP69K housed version (not shown here):

Process monitoring

Here’s a video focused on process monitoring applications with IDS uEye Live cameras. It’s the logical follow-on to the introductory video above:

Going further yet – from streaming to AI: scalable process monitoring

It doesn’t have to stop with “simple” industrial dashcam applications, though there’s nothing wrong with stopping there if you are getting good value. This segment introduces the concept of scaling up your process monitoring, even to the point of adding AI to interpret and act upon multiple live streams.

This video introduces how IDS industrial monitoring cameras enable scalable, network‑ready process monitoring – from simple streaming to advanced AI‑based analysis. You’ll learn how compressed video streaming, remote access and built‑in intelligence support a wide range of industrial and inspection tasks.

1st Vision’s sales engineers have over 100 years of combined experience to assist with your camera and component selection. With a large portfolio of cameras, lenses, cables, network interface cards and industrial computers, we can provide a full vision solution!

About you: We want to hear from you! We’ve built our brand on our know-how and like to educate the marketplace on imaging technology topics… What would you like to hear about?… Drop a line to info@1stvision.com with what topics you’d like to know more about.

#IDS

#uEyeLIVE

#industrialdashcam